Understanding The Real Challenges Of Testing Microservices

Testing microservices presents unique challenges compared to testing monolithic applications. Traditional testing methods often struggle to keep up due to the distributed nature of microservices. Understanding these challenges is crucial for a robust testing strategy.

The Complexity of Distributed Systems

One primary challenge is the inherent complexity of distributed systems. Unlike monolithic applications where all components reside together, microservices operate as independent units. This creates complexities in managing service dependencies, ensuring seamless communication between services, and handling potential network failures. A failure in one service, for instance, could trigger a domino effect across the system if dependencies are not managed effectively.

The sheer number of services within a microservices architecture also adds to the complexity. Testing every possible interaction becomes increasingly difficult as the system scales. This requires a shift from exhaustive testing to more focused, risk-based approaches. A significant 85% of modern enterprise companies are now managing complex applications with microservices, highlighting the widespread adoption of this architecture.

Traditional Testing Approaches Fall Short

Traditional testing approaches, often focused on end-to-end testing, are not ideal for microservices. The interconnected nature of microservices makes isolating individual services for testing difficult. End-to-end tests can also be slow and fragile, unsuitable for continuous integration and delivery pipelines. This necessitates the adoption of new testing strategies like contract testing, component testing, and service virtualization.

The Need for a New Mindset

Effective microservices testing demands a shift in mindset. Teams need to adopt a more collaborative approach, with developers, testers, and operations working together closely. This involves establishing clear communication, shared testing responsibilities, and a culture of quality throughout the development lifecycle.

Increased automation is also essential for managing the complexity and speed of microservices development. Tools like GoReplay can be incredibly helpful for capturing and replaying real-world traffic, creating realistic testing scenarios. This forms the foundation for robust and efficient testing practices within a microservices architecture.

Building Your Testing Strategy That Actually Works

Effective testing is crucial for any microservices architecture. A robust strategy requires more than just running tests; it demands a multi-layered approach that acknowledges the interconnected nature of these services. This section outlines a practical, proven strategy, from unit testing to end-to-end testing, to create a reliable testing ecosystem.

Layered Testing Approach for Microservices

A successful testing strategy starts with a solid foundation of unit tests. These tests isolate individual functions and components within a service, ensuring each works correctly on its own. Think of it like testing individual components of a machine before assembling the entire unit.

Integration tests build upon this foundation, verifying the interactions between a service and its external dependencies, such as databases or message queues. This is similar to ensuring that all the parts of a machine work together correctly. Component tests expand this scope further, assessing groups of related services as a unit.

Contract testing serves as a critical checkpoint, verifying that communication between services adheres to pre-established agreements. Finally, end-to-end tests simulate real-world user scenarios, confirming the entire system works cohesively. This ensures the entire machine operates smoothly under realistic conditions.

The increasing adoption of microservices has highlighted the importance of diverse testing strategies. Performance testing, end-to-end testing, and contract testing are particularly important for microservices.

Balancing Coverage and Speed

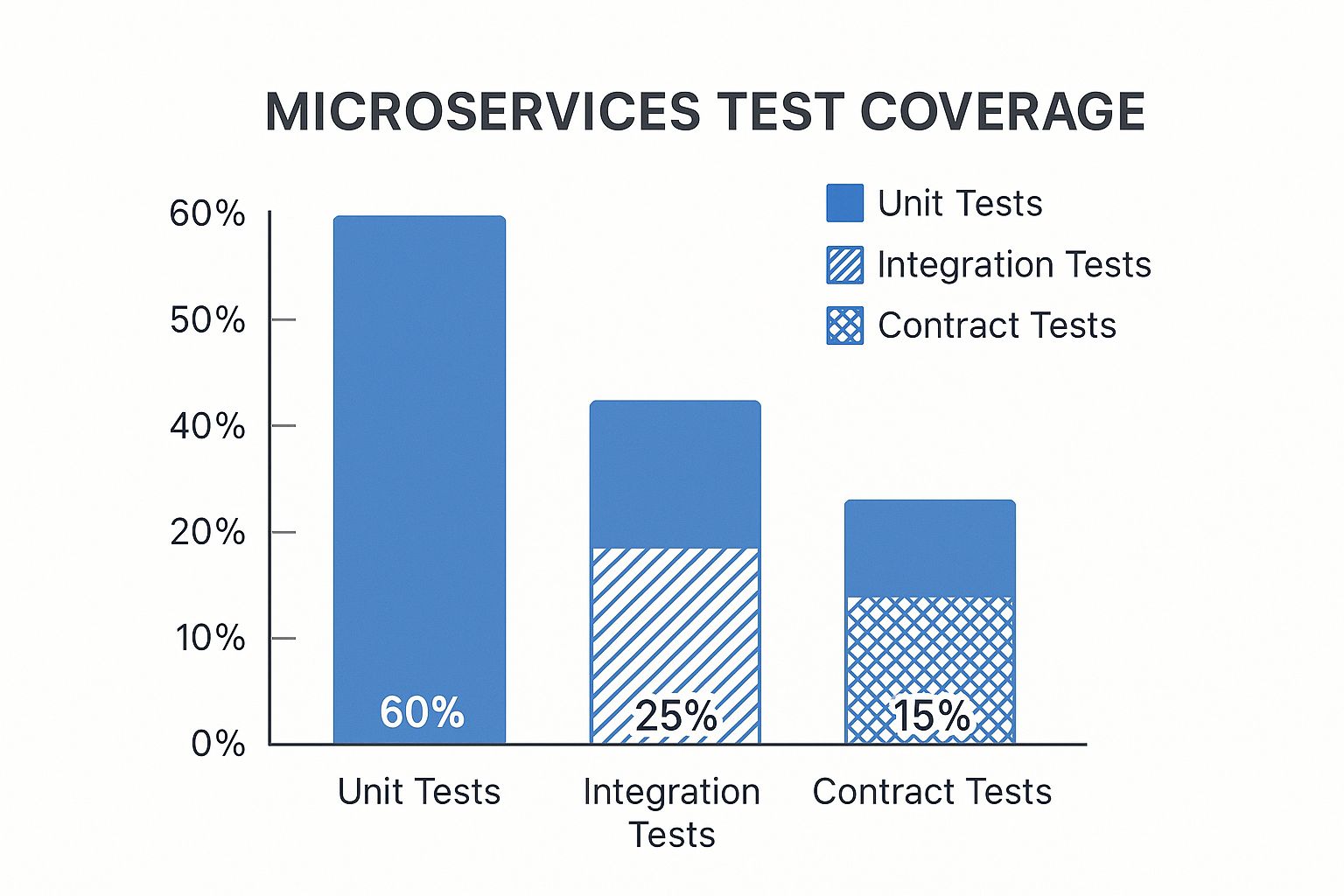

The infographic below visually represents the typical test coverage across different test types within a microservices architecture:

The infographic demonstrates that while unit tests typically have the highest coverage (60%), contract testing (15%) and integration tests (25%) play a vital role in overall quality assurance. This emphasizes the importance of a balanced approach where each test type contributes to a thorough testing strategy.

Finding the right balance between test coverage and execution speed is essential. While comprehensive testing is desirable, it can be time-intensive. Prioritize tests based on risk and potential impact. Focus on areas of high complexity or frequent changes, ensuring critical paths are thoroughly tested. Tools like GoReplay can improve testing efficiency by capturing and replaying real-world traffic.

To understand the strengths and weaknesses of each testing approach, the following table compares them:

Microservices Testing Strategies Comparison: the purpose, complexity, execution speed, and ideal use case of each testing approach

| Testing Type | Primary Purpose | Complexity Level | Execution Speed | Best Use Case |
|---|---|---|---|---|
| Unit Testing | Verify individual service functionality | Low | Fast | Testing individual components or functions in isolation |
| Integration Testing | Test interactions between services and external dependencies | Medium | Moderate | Validating interactions with databases, message queues, or external APIs |
| Component Testing | Assess a group of related services | Medium-High | Moderate | Testing a specific feature or workflow that spans multiple services |
| Contract Testing | Ensure communication between services adheres to agreements | Medium | Fast | Verifying API compatibility and preventing breaking changes |
| End-to-End Testing | Simulate real-world user scenarios | High | Slow | Testing the entire system flow and user experience |

This table summarizes the key characteristics of each testing type, allowing for a more informed selection based on the specific needs of the microservice architecture.

Implementing Your Testing Strategy

Start with a clearly defined testing plan. Outline the scope of each test type, identify important metrics, and assign clear responsibilities. Use automation tools to streamline the process and enable continuous testing within your CI/CD pipeline. Tools like GoReplay, for example, can be integrated into your CI/CD workflow to replay production traffic against new code changes, allowing for early detection of potential problems.

Regularly review and adapt your testing strategy as your system grows and changes. Microservices are dynamic, so your testing approach should be flexible as well. By using a multi-layered, balanced approach, and the right tools, you can create a testing strategy that effectively ensures the quality and reliability of your microservices architecture.

Mastering Test Automation For Distributed Systems

Manual testing becomes impractical when dealing with dozens or even hundreds of interconnected services in a microservices architecture. At this scale, test automation is no longer a luxury, but a necessity. Teams are increasingly adopting automation tools designed for the complexities of distributed systems.

The Power of Codeless Automation

Codeless automation platforms are empowering a wider range of team members to participate in test creation. Testers, business analysts, and even product owners can now create and manage automated tests without needing extensive coding skills. This democratization increases efficiency and broadens the perspectives applied to test coverage.

For example, a business analyst can validate a user journey across multiple services. Using a codeless platform, they simply define the steps and expected outcomes without writing any code. This accelerates testing and reduces reliance on specialized automation engineers.

Leveraging AI-Powered Testing Tools

AI-powered testing tools are gaining popularity for their ability to reduce system downtime and proactively identify issues. These tools utilize machine learning algorithms to analyze data, recognize patterns, and predict potential failures.

Furthermore, AI can dynamically adjust test cases based on system changes, making tests more resilient and adaptable to the constant evolution of microservices. This proactive approach minimizes the risk of production incidents and improves software quality.

Building Continuous Testing Pipelines

Continuous testing pipelines are essential for managing the rapid deployment cycles common in microservices. These pipelines automate test execution throughout the development lifecycle, validating every code change before deployment. The result is faster feedback and quicker time to market.

Maintaining test reliability and managing test data across numerous services presents a significant challenge. Strategies like service virtualization and test data management tools are key to establishing stable and predictable testing environments. By using these strategies, teams can ensure their tests are comprehensive and efficient, building confidence in the quality and reliability of their microservices.

Solving The Service Dependency Puzzle

Testing microservices presents a unique set of challenges, especially when it comes to managing service dependencies. How can you effectively test a service that relies on other services, particularly when those dependencies are still in development, managed by different teams, or simply unavailable in your test environment? This intricate web of dependencies can quickly become a bottleneck.

Strategies for Handling Dependencies

Thankfully, several strategies can help unravel this complex dependency situation. One common approach is service mocking. This involves creating stand-in versions of dependent services that return pre-programmed responses. Imagine it like using a stunt double in a film – it fills in for the real actor (service) during certain scenes (tests).

Test doubles, like stubs and mocks, provide more control over the simulated behavior. Stubs deliver canned responses, while mocks let you specify particular expectations and verify interactions. These techniques enable isolated testing, verifying that your service operates correctly regardless of the state of its dependencies.
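The difference between a stub and a mock is easier to see side by side. The following is a minimal sketch using Python's standard `unittest.mock`; `OrderService`, `StubPaymentClient`, and the `charge` method are hypothetical names invented for this illustration.

```python
from unittest.mock import Mock


class OrderService:
    """Hypothetical service under test; depends on a payment client."""

    def __init__(self, payment_client):
        self.payment_client = payment_client

    def place_order(self, order_id, amount):
        result = self.payment_client.charge(order_id, amount)
        return "confirmed" if result["status"] == "ok" else "rejected"


class StubPaymentClient:
    """Stub: returns a canned response and verifies nothing."""

    def charge(self, order_id, amount):
        return {"status": "ok"}


# Stub usage: we only check the outcome produced by the service.
stub_result = OrderService(StubPaymentClient()).place_order("o-1", 25)

# Mock usage: we also verify how the dependency was called.
mock_client = Mock()
mock_client.charge.return_value = {"status": "declined"}
mock_result = OrderService(mock_client).place_order("o-2", 40)
mock_client.charge.assert_called_once_with("o-2", 40)
```

The stub keeps the test focused on the outcome, while the mock additionally fails the test if the service calls its dependency with unexpected arguments.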

Another effective technique is service virtualization. This involves simulating the behavior of dependent services within a virtual environment, offering a more realistic testing scenario than basic mocking. It’s like constructing a film set that meticulously represents the real location, facilitating more authentic testing.
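Dedicated service virtualization tools go much further, but the core idea can be sketched with the Python standard library alone: a stand-in that speaks real HTTP, so the client code under test exercises its actual network path. The inventory service, the `/stock/<sku>` route, and `fetch_stock` are assumptions made up for this example.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class FakeInventoryHandler(BaseHTTPRequestHandler):
    """Simulates a hypothetical inventory service over real HTTP."""

    def do_GET(self):
        if self.path == "/stock/sku-1":
            status, body = 200, {"sku": "sku-1", "available": 3}
        else:
            status, body = 404, {"error": "unknown sku"}
        payload = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, format, *args):
        pass  # keep test output quiet


def fetch_stock(host, port, sku):
    """Client code under test; it talks to whatever host and port it is given."""
    conn = http.client.HTTPConnection(host, port, timeout=5)
    try:
        conn.request("GET", f"/stock/{sku}")
        return json.loads(conn.getresponse().read())
    finally:
        conn.close()


# Port 0 asks the OS for any free port, so parallel test runs do not collide.
server = HTTPServer(("127.0.0.1", 0), FakeInventoryHandler)
threading.Thread(target=server.serve_forever, daemon=True).start()
try:
    stock = fetch_stock("127.0.0.1", server.server_port, "sku-1")
finally:
    server.shutdown()
    server.server_close()
```

Unlike an in-process mock, this approach also covers serialization, status codes, and connection handling, at the cost of slightly slower tests.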

Isolated Test Environments and Test Data

Creating isolated test environments is paramount for dependable testing. By isolating your service and its dependencies, you eliminate outside factors that could influence test results. For instance, if your service interacts with a database, you might use a separate test database to avoid impacting production data. This guarantees consistent and reproducible tests.
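As a small illustration of the separate-test-database idea, the sketch below gives every test its own in-memory SQLite database. The `users` table and the helper functions are hypothetical; a real service would more likely use a throwaway instance of its production database engine.

```python
import sqlite3


def new_test_db():
    """Each call returns a fresh in-memory database, isolated from all others."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    return conn


def save_user(conn, name):
    """Hypothetical data-access function under test."""
    conn.execute("INSERT INTO users (name) VALUES (?)", (name,))
    conn.commit()


def count_users(conn):
    return conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]


first = new_test_db()
save_user(first, "alice")
second = new_test_db()  # starts empty, regardless of what happened in `first`
first_count, second_count = count_users(first), count_users(second)
first.close()
second.close()
```

Because no state is shared between the two connections, the tests can run in any order and still produce the same results.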

Managing test data across a distributed system is another crucial factor. Each service might require its own test data, and keeping this data synchronized can be a challenge. Strategies like data masking and test data generation can help create and manage test data effectively. This is comparable to ensuring the correct props are on hand for each scene, creating a believable and consistent setting.
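Both techniques can be sketched in a few lines. The functions below are illustrative assumptions rather than the API of any particular test data tool: `mask_email` hides personal data while keeping values stable across services, and `generate_orders` produces reproducible synthetic records.

```python
import hashlib
import random


def mask_email(email):
    """Replace the local part with a stable hash; keep the domain for realism."""
    local, _, domain = email.partition("@")
    digest = hashlib.sha256(local.encode("utf-8")).hexdigest()[:10]
    return f"user_{digest}@{domain}"


def generate_orders(count, seed=42):
    """Generate synthetic orders; a fixed seed makes the data reproducible."""
    rng = random.Random(seed)
    return [
        {"order_id": f"order-{i}", "amount_cents": rng.randint(100, 50_000)}
        for i in range(count)
    ]


masked = mask_email("jane.doe@example.com")
orders = generate_orders(3)
```

Deterministic masking matters in a distributed system: the same customer maps to the same masked value in every service's data set, so records still join up across services.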

Coordinating Testing Efforts Across Teams

When services are distributed across multiple development teams, coordination is essential. Service contracts establish the agreed-upon interactions between services, providing a common understanding. Testing against these contracts helps avoid breaking changes and ensures seamless integration. Think of it as a detailed script that everyone agrees upon, reducing unexpected issues during filming.

Even with thorough planning, breaking changes can still occur. Techniques like consumer-driven contract testing help catch these changes early. By allowing consumers to define their expectations, providers can make sure their changes don’t adversely affect dependent services. This is similar to having the director (consumer) approve any script modifications to maintain the story’s integrity. By employing these strategies, you can effectively manage service dependencies, build reliable testing environments, and coordinate testing across different teams, ultimately producing more resilient and dependable microservices.
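To make the consumer-driven idea concrete, here is a deliberately simplified sketch in which the consumer declares the fields and types it relies on and the provider's response is checked against them. It does not use the API of any contract testing tool; `CONSUMER_CONTRACT` and `contract_violations` are hypothetical names.

```python
# The consumer lists only the fields it actually depends on.
CONSUMER_CONTRACT = {
    "id": str,
    "total_cents": int,
    "currency": str,
}


def contract_violations(response, contract):
    """Return a list of human-readable violations; empty means compatible."""
    problems = []
    for field, expected_type in contract.items():
        if field not in response:
            problems.append(f"missing field: {field}")
        elif not isinstance(response[field], expected_type):
            problems.append(
                f"wrong type for {field}: expected {expected_type.__name__}"
            )
    return problems


# Extra fields are allowed: consumers ignore what they do not use.
compatible = {"id": "o-1", "total_cents": 1250, "currency": "EUR", "note": "gift"}
# Renaming a field is a breaking change for this consumer.
breaking = {"id": "o-1", "total": 1250, "currency": "EUR"}

ok_result = contract_violations(compatible, CONSUMER_CONTRACT)
bad_result = contract_violations(breaking, CONSUMER_CONTRACT)
```

Running a check like this in the provider's pipeline means a field rename is caught before deployment rather than by a failing consumer in production.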

Performance Testing When Everything Is Distributed

Performance testing takes on a whole new level of complexity when you’re dealing with a microservices architecture. Instead of one self-contained application, you have a network of interconnected services, each with its own quirks and performance characteristics. This distributed nature throws some curveballs into the testing process.

Challenges of Distributed Performance Testing

One of the biggest hurdles is network latency. Communication between services, especially across a network, inevitably introduces delays. Even small delays in one service can snowball, causing noticeable slowdowns across the entire system. This makes pinpointing performance bottlenecks much trickier.
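A little arithmetic shows why small delays add up. The sketch below assumes sequential calls and independent services, both simplifications, and the latency figures are invented for illustration.

```python
def sequential_latency_ms(hop_latencies_ms):
    """Total latency when each call waits for the previous one to finish."""
    return sum(hop_latencies_ms)


def chance_of_slow_request(hops, slow_fraction=0.01):
    """Probability that at least one of `hops` independent calls is slow."""
    return 1 - (1 - slow_fraction) ** hops


total = sequential_latency_ms([12, 8, 30, 15])
one_hop = chance_of_slow_request(1)
ten_hops = chance_of_slow_request(10)
```

Under these assumptions, if each service is slow for 1% of calls, a request that touches ten services hits at least one slow call roughly 9.6% of the time, which is why per-service percentiles alone can understate what users experience.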

Another layer of complexity comes from the overhead of service-to-service communication. Each interaction between services has a cost. As the number of services and interactions grows, this overhead can become a significant performance drain. Think of it like adding extra steps to a production line – each step, no matter how small, adds to the total time and cost.

Perhaps the most daunting challenge is the risk of cascading failures. A single failing service can trigger a chain reaction, affecting other dependent services and potentially crashing the whole system. Robust performance testing needs to account for these failure scenarios and ensure the system can handle them gracefully.
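One way to test for graceful handling is to inject a failing dependency and assert that the caller degrades instead of crashing. The handler and dependency functions below are hypothetical and only sketch the pattern.

```python
def get_product_page(product_id, fetch_recommendations):
    """Hypothetical handler: the page must render even if recommendations fail."""
    page = {"product_id": product_id, "recommendations": [], "degraded": False}
    try:
        page["recommendations"] = fetch_recommendations(product_id)
    except (TimeoutError, ConnectionError):
        # Contain the failure instead of letting it propagate upstream.
        page["degraded"] = True
    return page


def healthy_dependency(product_id):
    return ["p-2", "p-3"]


def failing_dependency(product_id):
    raise TimeoutError("recommendation service did not respond")


normal_page = get_product_page("p-1", healthy_dependency)
degraded_page = get_product_page("p-1", failing_dependency)
```

The same idea scales up to performance tests: take one service offline or slow it down during a run, then check that its callers keep responding.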

Load Testing vs. Stress Testing

When testing microservices performance, you’ll face a choice between load testing and stress testing. Load testing simulates real-world user traffic to see how the system performs under normal conditions. It’s like seeing how a bridge handles everyday traffic flow.

Stress testing, on the other hand, pushes the system past its limits. The goal is to identify breaking points and see how the system recovers. Imagine gradually increasing the weight on that bridge until you find its limit.

The best approach depends on your objectives. Load testing ensures your system can handle the expected traffic, while stress testing reveals its resilience and capacity for dealing with unexpected surges or failures.
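The two approaches differ mainly in how the load level is chosen. In the sketch below, `toy_error_rate` is a made-up stand-in for a real measurement (in practice it would drive traffic at the system and return the observed error rate), and the capacity of 500 requests per second is an arbitrary assumption.

```python
def ramp_until_failure(measure_error_rate, start, step, limit, max_error_rate=0.05):
    """Stress test: raise the load step by step and return the first
    level whose error rate exceeds the threshold, or None if none does."""
    level = start
    while level <= limit:
        if measure_error_rate(level) > max_error_rate:
            return level
        level += step
    return None


def toy_error_rate(requests_per_second, capacity=500):
    """Stand-in for a real measurement: requests beyond capacity fail."""
    rejected = max(0, requests_per_second - capacity)
    return rejected / requests_per_second


# Load test: measure once at the expected traffic level.
load_test_error_rate = toy_error_rate(300)

# Stress test: keep increasing the load until the system breaks.
breaking_point = ramp_until_failure(toy_error_rate, start=100, step=100, limit=1000)
```

With this toy model the load test reports no errors at 300 requests per second, while the ramp first breaches the 5% threshold at 600.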

Identifying Bottlenecks

Finding bottlenecks in a distributed system requires a different mindset than with traditional monolithic applications. Traditional profiling tools might not be as effective because the bottleneck could be in the communication between services, not within a single service.

Distributed tracing can be a game-changer here, allowing you to follow requests as they flow through the system. Tools like GoReplay can also be invaluable, capturing and replaying real traffic to pinpoint performance issues.
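The mechanism behind distributed tracing is simple at its core: every service records its work under a trace ID and forwards that ID to whatever it calls. The sketch below simulates this in a single process; the `X-Trace-Id` header name, the `spans` list, and `call_service` are assumptions for illustration, and real tracing systems record far more, such as timings and parent-child relationships.

```python
import uuid

TRACE_HEADER = "X-Trace-Id"  # hypothetical header name, chosen for this sketch

spans = []  # in a real system these records would go to a tracing backend


def call_service(name, headers, downstream=()):
    """Record a span for this service, then forward the same trace ID downstream."""
    trace_id = headers.get(TRACE_HEADER) or uuid.uuid4().hex
    spans.append({"trace_id": trace_id, "service": name})
    for child in downstream:
        call_service(child, {TRACE_HEADER: trace_id})
    return trace_id


trace_id = call_service("gateway", {}, downstream=["orders", "payments"])
```

Because all three spans share one trace ID, they can be grouped after the fact to reconstruct the path of a single request across services.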

Metrics That Matter

Finally, focus on the key performance metrics that are important in a production environment. While classic metrics like response time and throughput are still relevant, other factors become even more critical in a distributed setting. These include service availability, error rates, and recovery time.
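As a rough sketch of how such metrics can be derived from raw test results, the code below summarizes a list of `(latency_ms, succeeded)` samples. The sample data and function names are invented for this example; availability and recovery time would need timestamps as well, which are omitted here for brevity.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least
    pct percent of the samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(samples, window_seconds):
    """samples: list of (latency_ms, succeeded) tuples from one test run."""
    latencies = [latency for latency, _ in samples]
    failures = sum(1 for _, ok in samples if not ok)
    return {
        "throughput_rps": len(samples) / window_seconds,
        "error_rate": failures / len(samples),
        "p95_latency_ms": percentile(latencies, 95),
    }


samples = [(20, True)] * 18 + [(250, True), (900, False)]
summary = summarize(samples, window_seconds=10)
```

Percentiles are used instead of the average because a handful of very slow requests, like the 900 ms failure here, are exactly what an average hides.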

Effective performance testing for microservices requires a deep understanding of the challenges inherent in distributed systems. By using the right strategies, tools, and focusing on the most important metrics, you can make sure your microservices architecture is reliable and efficient in the real world.

Smart Testing Solutions That Won’t Break The Budget

Comprehensive testing for microservices doesn’t have to drain your budget. Smart teams achieve robust test coverage while managing costs through strategic tool selection and efficient processes. This means you can prioritize both quality and affordability. This section explores how to strike this balance, focusing on tools and techniques that maximize your return on investment (ROI).

Budget-Friendly Testing Tools and Techniques

Cost-effective testing doesn’t mean sacrificing quality. Many open-source tools offer powerful capabilities for testing microservices. Tools like GoReplay allow you to capture and replay real-world traffic, creating realistic test scenarios without the high cost of commercial solutions. This approach ensures thorough testing under real-world conditions.

Consider a hybrid approach, combining open-source tools with specific commercial solutions for specialized needs. For instance, you might use open-source tools for unit and integration testing and invest in a commercial performance testing tool for more in-depth analysis and reporting. This targeted investment strategy optimizes cost-effectiveness.

The Financial Impact of Effective Testing

The cost of not testing effectively can significantly outweigh the investment in testing itself. Production failures can lead to downtime, lost revenue, and damage to your reputation. A solid testing strategy minimizes these risks and helps prevent costly disasters. Furthermore, efficient testing processes lead to faster development cycles and reduced time to market.

Infrastructure choices can also reduce costs. With serverless computing, for example, companies only pay for the functions executed, potentially saving up to 30% on operating costs. These cost savings free up resources for other essential initiatives.

Measuring and Optimizing Your Testing ROI

To demonstrate the value of testing to stakeholders, you need clear metrics. Track metrics such as the number of defects found before release, the reduction in production incidents, and the time saved through automation. These data points showcase the impact of your testing strategy and justify your investment.

To help you further analyze the costs and benefits of various testing tools, the following table compares several popular microservices testing tools by license cost, key features, suitability for different team sizes, and estimated ROI timeline.

| Tool/Platform | License Cost | Key Features | Team Size Suitability | Estimated ROI Timeline |
|---|---|---|---|---|
| GoReplay | Open-source | Real-world traffic replay, shadow testing | Small to Large | Short-term |
| JMeter | Open-source | Load testing, performance testing | Small to Large | Short to Mid-term |
| Postman | Freemium/Paid | API testing, collaboration features | Small to Large | Short-term |
| Katalon Studio | Freemium/Paid | UI testing, API testing, test automation | Medium to Large | Mid-term |
| SoapUI | Open-source/Paid | API testing, security testing | Small to Large | Short to Mid-term |

As the table shows, open-source tools like GoReplay and JMeter can provide significant value with a quick ROI. While paid tools may offer advanced features, consider your specific needs and budget when making a decision.

Regularly review and optimize your testing processes. Focus on identifying and eliminating redundancies, automating manual tasks, and prioritizing high-risk areas. These process improvements will lead to greater efficiency and an improved ROI. By making informed choices and continuously refining your testing strategy, you can ensure comprehensive test coverage for your microservices without overspending.

Your Roadmap To Testing Success

Want to boost your microservices testing strategy? This roadmap offers practical guidance tailored to your team’s resources, limitations, and objectives. It starts with understanding your current setup and translating business goals into testable criteria. We’ll also explore how to prioritize testing based on risk and impact, focusing on practical application over theoretical ideals.

Prioritizing Testing Initiatives

Forget aiming for 100% test coverage. It’s often unrealistic. Instead, prioritize tests based on the potential fallout of failure and the probability of problems occurring. For instance, a vital service processing financial transactions demands more stringent testing than one displaying static content. This risk-based method optimizes your testing efforts and makes the most of your resources.

Also, factor in how often different services change. Services with frequent updates need more regular testing to catch regressions quickly. This proactive approach minimizes the chance of bugs reaching production.
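Risk-based prioritization can be as simple as a score per service. The formula and the numbers below are illustrative assumptions, not an established standard: impact and likelihood are rated by the team on a 1 to 5 scale, and change frequency scales the result.

```python
def risk_score(service):
    """Impact times likelihood, scaled up for services that change often."""
    return (
        service["impact"]
        * service["likelihood"]
        * (1 + service["changes_per_week"])
    )


services = [
    {"name": "static-content", "impact": 1, "likelihood": 1, "changes_per_week": 0},
    {"name": "payments", "impact": 5, "likelihood": 3, "changes_per_week": 2},
    {"name": "search", "impact": 3, "likelihood": 2, "changes_per_week": 4},
]

# Highest score first: this is the order in which to invest testing effort.
test_order = [s["name"] for s in sorted(services, key=risk_score, reverse=True)]
```

Even a crude score like this makes the trade-off explicit and gives teams something concrete to revisit as services and their change rates evolve.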

Establishing Testing Standards

Consistency is paramount in a microservices architecture. Clear testing standards across development teams ensure a consistent level of quality and simplify integration. This involves establishing common testing frameworks, tools, and metrics.

For example, all teams could use the same unit testing framework, like JUnit for Java or NUnit for .NET. They could also adopt a shared tool for contract testing, such as Pact. Shared tools and frameworks facilitate collaboration and knowledge transfer.

Avoiding Common Implementation Mistakes

One frequent mistake is overlooking contract testing. Without confirming that services stick to agreed-upon contracts, breaking changes can slip through, leading to integration headaches and system instability.

Another trap is inadequate test data management. Without realistic and representative test data, testing becomes ineffective. You need strategies for efficient test data creation and management.

Measuring Testing Effectiveness and Continuous Improvement

Track key metrics like the number of defects found pre-release, the decrease in production incidents, and time saved through automation. These measurable results prove the value of testing and pinpoint areas for improvement.

Regularly review your testing processes and adapt your strategy based on data and feedback. Continuous improvement keeps your testing approach relevant and effective. This iterative process maximizes your testing ROI and boosts the overall quality of your microservices architecture. Want to enhance your testing and ensure the reliability of your microservices? Explore GoReplay and its powerful features for capturing and replaying live HTTP traffic. It can transform your testing process.