Breaking the Fear: Why Test in Production Actually Works

The idea of “test in production” often makes software developers nervous. It brings to mind system failures, unhappy users, and long debugging nights. But this view is out of date. Modern test in production strategies, done right, aren’t about reckless experiments. They’re about getting valuable insights and making more resilient systems. This approach is a major change, recognizing that a perfect copy of a production environment before launch is often unrealistic.

For example, user behavior is unpredictable. Your staging environment, no matter how detailed, can’t fully copy the many ways real users interact with your application. This means important performance issues and unusual cases often appear only after your software is live. Testing in production fixes this, letting you see and address these real-world scenarios.

Also, today’s applications are very complex, with their microservices and dynamic scaling. It’s almost impossible to predict every possible interaction before launch. Testing in production lets you check how your system handles real loads and traffic patterns. This real-world data is key to fine-tuning performance and ensuring stability.

Furthermore, this approach fits perfectly with agile software development, allowing for continuous improvement based on real-time feedback.

The rising use of DevOps and CI/CD pipelines makes testing in production even more important. DevOps and CI/CD emphasize fast updates and continuous deployment, making real-world testing a must. The growth of the testing-as-a-service market shows this trend. This market includes services for testing in production systems. It was valued at USD 4.54 billion in 2023 and is expected to grow at a CAGR of 14.0% through 2030. You can find more statistics here: Software Testing Statistics This growth shows the increasing value placed on testing in production as a vital part of a robust software development lifecycle.

Embracing the Learning Opportunity

Testing in production shouldn’t be seen as risky, but as a chance to learn. By using the right safeguards and focused testing methods, companies can reduce risk and get the most from real-world feedback. This change in mindset, from fear to smart experimentation, is key for building truly strong and user-focused applications. This approach helps you collect valuable data, improve your software based on real use, and ultimately, deliver a better product.

Your Production Testing Safety Net: Proven Techniques That Work

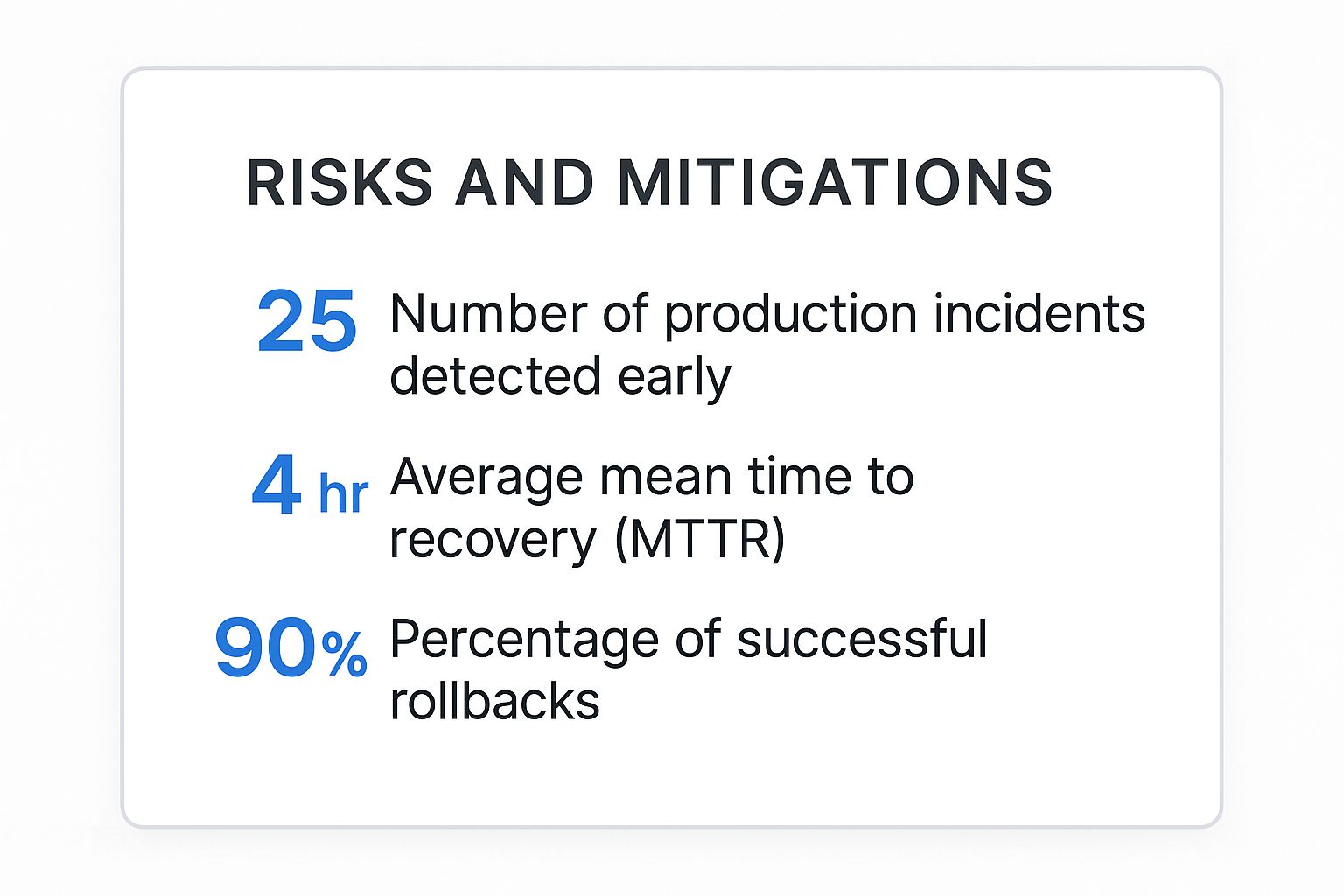

The infographic above highlights key data points regarding the effectiveness of production testing strategies. These include the number of incidents detected early, the average Mean Time To Resolution (MTTR), and the successful rollback rate. It visually demonstrates how proactive testing in production significantly reduces the impact of unforeseen issues.

With a high percentage of incidents caught early and a low MTTR, negative consequences for users are minimized. The high successful rollback rate further reinforces the safety and control these techniques offer.

Testing in production doesn’t have to be risky. Think of it as a safety net, allowing you to validate your application under real-world conditions. This controlled approach employs specific techniques that catch issues before they affect users, providing a robust way to test, learn, and iterate quickly.

Feature Flags: Your Control Panel for Change

Feature flags, also called feature toggles, act as on/off switches for your code. They allow you to release new features to a select group of users, test in the live environment, and quickly roll back if necessary.

This granular control minimizes the impact of bugs or unexpected behavior. For example, a new checkout flow could be enabled for only 10% of users initially. This allows performance monitoring before a wider rollout.

Canary Deployments: Dipping Your Toe in the Water

Canary deployments are like a controlled experiment. A new software version is deployed to a small segment of your production infrastructure. This lets you observe performance with real users and identify any problems before they reach a larger audience.

This approach offers valuable performance and stability data under real-world conditions, enhancing the development process.

Blue-Green Deployments: Instant Rollbacks for Peace of Mind

Blue-green deployments involve two identical production environments: “blue” (live) and “green” (staging). The new version is deployed to the “green” environment, thoroughly tested, then all traffic is switched from “blue” to “green.”

This enables near-instant rollbacks if problems arise, a crucial capability for maintaining user trust and minimizing downtime.

Circuit Breakers: Automated Protection Against Cascading Failures

Imagine a traffic surge overwhelming one of your microservices. This could cascade through your entire system. Circuit breakers prevent this by automatically “tripping” when a service fails. They divert traffic or return a default response, preventing a system-wide outage. This automated protection maintains system stability during unexpected events.

Monitoring and Alerting: Early Warning Systems for Production Issues

Comprehensive monitoring and alerting are crucial for any production testing strategy. Real-time monitoring of key metrics, such as error rates, latency, and resource usage, identifies potential problems early.

Automated alerts notify your team when critical thresholds are crossed, allowing rapid response and minimizing user impact. This proactive approach prevents small issues from becoming major incidents.

To help illustrate the strengths and weaknesses of each technique, consider the following comparison:

Production Testing Techniques Comparison Comparison of different production testing methods, their risk levels, implementation complexity, and ideal use cases

| Technique | Risk Level | Implementation Complexity | Best Use Cases | Rollback Speed |

|---|---|---|---|---|

| Feature Flags | Low | Low | New features, A/B testing | Very Fast |

| Canary Deployments | Medium | Medium | Incremental releases, performance testing | Fast |

| Blue-Green Deployments | Low | High | Major version releases, critical applications | Instant |

| Circuit Breakers | Low | Medium | Preventing cascading failures, microservices | Automatic |

This table summarizes the key attributes of each production testing method, allowing for quick comparison and selection of the best strategy for your specific needs. Choosing the right combination of these techniques is key to a robust and reliable production environment.

Building Teams That Thrive With Production Testing

Successfully implementing testing in production isn’t simply about having the right tools. It’s about cultivating a supportive team culture that embraces this approach. This shift requires a change in how teams operate, communicate, and learn from inevitable mistakes. This section explores the human element of production testing and how to build teams that not only survive but thrive.

Psychological Safety: The Foundation of a Learning Culture

The foundation of successful production testing is psychological safety. This environment allows team members to take calculated risks, admit mistakes, and seek help without fear of blame.

This encourages open communication and collaboration, which are crucial for quickly identifying and resolving issues. For instance, a developer who feels safe admitting a mistake will report it promptly, preventing a minor bug from escalating into a major problem.

Ownership and Accountability: Clarity Over Chaos

Clearly defined ownership models are essential. Each team member needs to understand their responsibilities and have the authority to make decisions within their area of expertise.

This clarifies roles, ensures issues are addressed promptly, and instills pride in the work, promoting proactive problem-solving. Interestingly, the global ratio of software testers is about 5.2 per 100,000 people, with Ireland having a significantly higher concentration. This highlights the growing importance of specialized testing roles. More detailed statistics can be found here: Software Testing Statistics

Communication Is Key: Keeping Everyone in the Loop

Effective communication is vital for any team, especially when testing in production. Establish clear communication channels for reporting incidents, sharing updates, and coordinating responses.

This could include dedicated Slack channels, regular stand-up meetings, or automated reporting tools. Open communication between development, operations, and other relevant teams minimizes misunderstandings and encourages collaborative problem-solving.

Learning From Mistakes: Post-Incident Reviews for Growth

Incidents will inevitably happen. Instead of assigning blame, treat them as learning opportunities. Conduct post-incident reviews to understand the root cause, identify systemic issues, and implement preventive measures.

This fosters a culture of continuous improvement and builds resilience. The focus should be on what went wrong and how to prevent it, rather than who made the mistake.

The Mindset Shift: From Fear to Data-Driven Confidence

Transitioning to a production testing mindset requires a shift from fear-based testing to data-driven experimentation. Encourage your team to see production as a valuable source of real-world data.

By analyzing user behavior and system performance in the live environment, teams can identify areas for improvement and optimize the application. This transforms testing from a necessity into a powerful tool for innovation and continuous learning.

The Production Testing Toolkit: Tools That Actually Matter

Building on solid testing principles, this section explores the essential tools for effective and safe testing in live environments. From observability platforms to feature flag management systems, these tools minimize risk and maximize learning. The focus here is on selecting tools that truly benefit your specific situation, not simply chasing the newest technology.

Observability: Illuminating Your Production Landscape

Observability platforms offer more than basic monitoring. They provide deep insights into application behavior in production, helping you understand why something happens, not just that it happened.

A strong observability setup includes:

- Metrics: Track key performance indicators (KPIs) like latency, error rates, and resource utilization, providing a quantitative view of your application’s health.

- Tracing: Follow requests through your system, pinpointing bottlenecks and dependencies to map your application’s inner workings.

- Logging: Capture detailed events and errors, providing valuable context for understanding incidents and creating a record of events.

Effective observability is vital for detecting anomalies, quick diagnosis, and understanding the impact of changes.

Feature Flags: Precision Control Over Your Releases

Feature flags offer precise control over new feature rollouts, allowing for production testing with minimal risk. This enables targeted testing with specific users, A/B testing, and quick rollbacks.

Robust feature flag management systems offer:

- Targeted Rollouts: Enable features for specific user segments based on demographics, location, or other criteria, allowing controlled experiments.

- A/B Testing: Compare different feature variations to determine optimal performance, improving user engagement and conversion.

- Kill Switches: Instantly disable features if problems arise, providing a safety net for quick reversions without full deployment rollbacks.

Traffic Management and Mirroring: Replicating Real-World Scenarios

Tools like GoReplay capture and replay real production traffic, creating a powerful testing resource. This facilitates realistic test environments, load testing, and debugging with actual user interactions.

Traffic mirroring tools provide:

- Realistic Load Testing: Simulate real-world traffic patterns to accurately test application capacity and resilience, ensuring it can handle peak demand.

- Session-Aware Replay: Reproduce user sessions faithfully, including cookies and headers, ensuring accurate reproduction of complex user flows.

- Debugging with Real Data: Replay specific user interactions that triggered errors for easier diagnosis and fixing of complex problems.

Check out this guide: How to Master API Load Testing With GoReplay for more details. You might also find this interesting: How to master…

Building Your Toolkit Incrementally: Start Small, Scale Smartly

Building your production testing toolkit doesn’t necessitate a huge initial investment. Start with tools addressing your most urgent needs and gradually expand as your testing strategy develops.

The following table provides a starting point for evaluating tools:

Production Testing Tools Evaluation Matrix: Comprehensive comparison of popular production testing tools, their features, pricing models, and integration capabilities

| Tool Category | Popular Options | Key Features | Team Size Fit | Integration Complexity |

|---|---|---|---|---|

| Observability | Datadog, New Relic, Prometheus | Metrics, tracing, logging | All | Varies |

| Feature Flags | LaunchDarkly, Optimizely, Split | Targeted rollouts, A/B testing, kill switches | Small to Enterprise | Low to Medium |

| Traffic Mirroring | GoReplay, tcpreplay | Load testing, session replay | All | Low to Medium |

Choosing the right tools depends on your team’s workflow and cultural readiness as much as the features offered. Prioritize tools that integrate seamlessly with your current systems and empower your team to test confidently and effectively in production.

Proving Value: How to Measure Production Testing Success

Knowing if your production testing strategy is working requires a solid plan for measuring success. This means choosing the right metrics, establishing baselines, and tracking progress. A data-driven approach lets you show the value of production testing to stakeholders and constantly improve your strategy.

Key Metrics: Focusing on What Matters

Effective measurement starts with picking the right metrics. These metrics should reflect the goals of your production testing strategy. For instance, if you’re aiming for better application stability, some key metrics might include:

-

Error Rates: Tracking how often and what kind of errors occur in production directly measures application stability. Fewer errors mean better reliability.

-

Mean Time To Recovery (MTTR): This metric measures how quickly your team fixes production incidents. A lower MTTR shows a more efficient incident response.

-

Deployment Frequency: This shows how often you can safely deploy new code. Higher deployment frequency means streamlined processes and quicker delivery.

If your goal is a better user experience, metrics like customer satisfaction scores and conversion rates become more important. It’s crucial to align your metrics with your overall objectives. You might find this helpful: How to master essential metrics for software testing.

Establishing Baselines and Tracking Progress

After choosing your metrics, establish baselines. This shows current performance before changing your production testing strategy, letting you objectively measure the impact of any adjustments.

Track these metrics to spot trends and areas for improvement. For example, a consistently high MTTR might mean you need better monitoring or incident response procedures.

Advanced Analytics: Optimizing Your Testing Strategy

Beyond basic tracking, advanced analytics can reveal deeper insights. By connecting different metrics, you might find unexpected relationships and uncover the root causes of production issues. Use this information to fine-tune your testing strategy and make it even better.

For example, analyzing user sessions before errors happen can highlight usage patterns that contribute to certain problems. This lets you target your production testing more effectively.

Communicating Value: Making Your Case to Stakeholders

Showing the Return On Investment (ROI) of production testing is crucial for getting stakeholder support. Present your findings in a way your audience understands. For technical audiences, focus on data and performance improvements.

For business stakeholders, highlight the impact on user satisfaction, business outcomes, and reduced risk. This approach clearly shows the value of your work and justifies continued investment in production testing. Also, consider the long-term benefits of better software quality and increased user trust. These often lead to real business value through a better reputation and customer loyalty. By showing how production testing positively affects the bottom line, you can effectively advocate for its continued use and improvement.

Navigating Common Roadblocks and Winning Over Skeptics

Adopting a “test in production” strategy offers significant advantages, but it also presents unique challenges. This section addresses common roadblocks and provides practical solutions for overcoming them, transforming skepticism into confident adoption.

Legacy Systems: Modernizing the Past

Many organizations work with legacy systems that weren’t designed for modern testing. These systems often lack the modularity and observability needed for safe and effective production testing. A gradual, strategic approach can make the transition more manageable.

Identify key areas where production testing offers the most value. Focus on new features or modules that interact with the legacy system. This allows you to gain experience and demonstrate value without significant risk.

For example, use feature flags to test new functionality with a small group of users, limiting the impact on the legacy system.

Compliance and Regulations: Testing Within Boundaries

Highly regulated industries, such as finance and healthcare, must adhere to strict compliance requirements. These regulations can make production testing seem daunting, but it’s not impossible with careful planning.

Integrate compliance considerations from the outset of your testing strategy. Collaborate with your compliance team to identify potential risks and ensure your testing procedures meet all regulatory requirements.

For example, anonymize sensitive data before using it in production tests. This protects user privacy while still providing valuable real-world insights.

Risk Aversion: Building Confidence Incrementally

A culture of risk aversion can sometimes hinder the adoption of production testing. Stakeholders may hesitate to embrace a practice perceived as inherently risky. The solution is to demonstrate value through incremental wins and gradually build confidence.

Start with low-risk testing strategies like canary deployments or feature flags, showcasing positive results. As confidence grows, gradually expand the scope and complexity of your testing. This measured approach reduces perceived risk and demonstrates tangible benefits.

Technical Debt: Addressing Underlying Issues

Existing technical debt can complicate production testing. Complex, poorly documented codebases make it difficult to implement necessary safeguards and monitor system behavior. Addressing technical debt strategically can pave the way for successful production testing.

Prioritize resolving technical debt in the areas you plan to test in production. For instance, improve logging and monitoring around a specific feature before introducing production tests. This focused approach makes technical debt manageable while supporting your testing goals.

When Test in Production Isn’t Ideal: Knowing Your Limits

While testing in production offers many benefits, it’s not always the best approach. For critical systems where even minor disruptions have severe consequences, alternative strategies may be more appropriate.

In these cases, invest in pre-production testing environments that closely mimic production conditions. Use tools like GoReplay to capture and replay real production traffic, creating realistic test scenarios without risking live users. This allows for thorough testing before release, minimizing the need for extensive production testing.

GoReplay is a valuable tool for optimizing your testing strategy. Its ability to capture and replay real production traffic enables realistic testing scenarios without the risks of direct production testing.